Enterprise Data Pipeline Optimization for AI Model Training and Deployment

Learn how to optimize enterprise data pipelines for AI model training and ML deployment. Discover proven strategies for 10x efficiency gains in production environments.

Team

Contributor


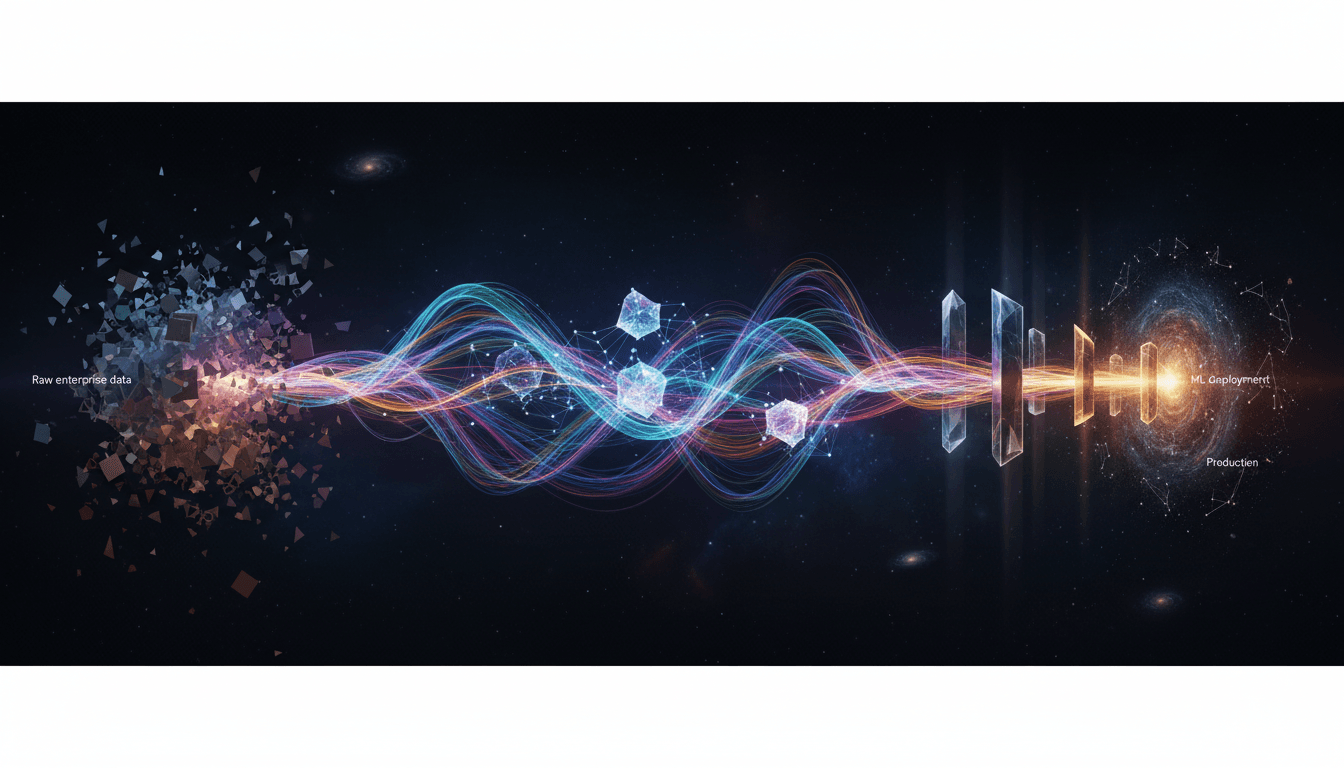

Optimizing enterprise data pipelines for AI model training and deployment requires an architecture that handles data ingestion, transformation, validation, and versioning at scale. Success depends on building resilient, automated pipelines that can process terabytes of enterprise data while maintaining data quality, lineage tracking, and integration with ML deployment workflows. The goal is production-ready systems that deliver measurable ROI within 90 days.

Why Traditional Data Pipelines Fail at Enterprise AI Scale

Most organizations discover that their existing data infrastructure crumbles under the demands of modern AI model training. Traditional ETL pipelines were designed for business intelligence and reporting, not the computational intensity and data volume requirements of machine learning operations. Enterprise data for AI presents unique challenges: streaming data from multiple sources, real-time feature engineering, versioning of both data and models, and the need for reproducible training environments.

The fundamental issue is that AI model training requires data pipelines that can handle both batch and streaming workflows simultaneously. Your sales data might update hourly, customer behavior streams in real-time, and external market data arrives in scheduled batches. A single AI model might need to incorporate all three, requiring orchestration capabilities far beyond conventional data warehousing approaches.

Moreover, data quality issues that might be acceptable for dashboards become catastrophic for ML deployment. A single corrupted field or an unnoticed schema drift can invalidate weeks of training runs. Enterprise-grade solutions must include automated data validation, anomaly detection, and rollback capabilities. As one practitioner put it while building Influence Craft, a voice-to-social-media content platform, "one of the things we had to overcome was how do we 10x efficiency when it comes to development." Working through that challenge showed that AI transformation requires rethinking entire workflow architectures, not just bolting AI onto existing processes.

Building Scalable Data Ingestion Architectures for AI Workloads

Effective data pipelines for AI model training begin with robust ingestion layers that can handle diverse data sources without becoming bottlenecks. Enterprise environments typically involve dozens or hundreds of data sources: transactional databases, cloud storage, streaming APIs, third-party data providers, and legacy systems. Your ingestion architecture must accommodate structured, semi-structured, and unstructured data while maintaining consistent metadata and lineage tracking.

The most successful implementations adopt a multi-tier ingestion strategy. Raw data lands in a bronze layer with minimal transformation—preserving complete fidelity and enabling future reprocessing. A silver layer applies standardization, deduplication, and quality checks. The gold layer contains curated, feature-engineered datasets optimized for specific AI model training scenarios. This medallion architecture provides flexibility while ensuring data governance.
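The three layers can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the record fields (`order_id`, `customer`, `amount`) and the function names are hypothetical, and a real deployment would use tables in a lake or warehouse instead of in-memory lists.

```python
# Hypothetical raw records as they might land from a source system.
RAW_EVENTS = [
    {"order_id": "A1", "customer": " alice ", "amount": "19.99"},
    {"order_id": "A1", "customer": " alice ", "amount": "19.99"},  # duplicate
    {"order_id": "B2", "customer": "Bob", "amount": "5.00"},
    {"order_id": "C3", "customer": "alice", "amount": "not-a-number"},  # bad row
]


def to_bronze(raw):
    """Bronze: copy records unchanged so they can be reprocessed later."""
    return [dict(record) for record in raw]


def to_silver(bronze):
    """Silver: standardize fields, drop duplicates, reject rows that fail checks."""
    seen_ids = set()
    clean = []
    for row in bronze:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # a real pipeline would route this row to a quarantine area
        if row["order_id"] in seen_ids:
            continue  # duplicate order, keep the first occurrence only
        seen_ids.add(row["order_id"])
        clean.append({
            "order_id": row["order_id"],
            "customer": row["customer"].strip().lower(),
            "amount": amount,
        })
    return clean


def to_gold(silver):
    """Gold: aggregate cleaned rows into per-customer features."""
    features = {}
    for row in silver:
        entry = features.setdefault(
            row["customer"], {"order_count": 0, "total_spend": 0.0}
        )
        entry["order_count"] += 1
        entry["total_spend"] += row["amount"]
    return features


bronze = to_bronze(RAW_EVENTS)
silver = to_silver(bronze)
gold = to_gold(silver)
```

Because bronze keeps every record, including the duplicate and the malformed row, the silver rules can be changed later and replayed without going back to the source systems.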

Stream processing frameworks like Apache Kafka or cloud-native services enable real-time data capture, and they can provide exactly-once processing semantics when producers and consumers are configured for it (in Kafka, through idempotent producers and transactions). For AI applications requiring low-latency predictions, streaming pipelines must feed both training and inference systems. Consider a fraud detection model: it needs historical transaction data for training, but real-time transaction streams for production deployment. Your pipeline architecture must serve both needs without duplicating infrastructure.

Change data capture (CDC) mechanisms ensure that updates to source systems automatically propagate through your pipelines. This is critical for maintaining model accuracy, because models trained on stale data lose accuracy as the underlying data shifts. Automated CDC with schema evolution handling means your data pipelines adapt as source systems evolve, preventing the pipeline brittleness that plagues many enterprise AI initiatives.
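As a rough sketch of the idea, the snippet below applies a stream of change events to an in-memory replica and tolerates a column that appears partway through. The event shape (`op`, `key`, `row`) is an assumption made for illustration; real CDC tools define their own event formats.

```python
def apply_change(table, columns, event):
    """Apply one change event to a replica keyed by primary key.

    `table` maps primary key -> row dict; `columns` is the known column list.
    The event layout used here is hypothetical.
    """
    if event["op"] == "delete":
        table.pop(event["key"], None)
        return

    row = event["row"]
    # Schema evolution: register unseen columns and backfill older rows with None.
    for column in row:
        if column not in columns:
            columns.append(column)
            for existing in table.values():
                existing.setdefault(column, None)

    # Inserts and updates are both treated as upserts.
    current = table.setdefault(event["key"], {c: None for c in columns})
    current.update(row)


events = [
    {"op": "insert", "key": 1, "row": {"name": "Ada", "plan": "free"}},
    {"op": "insert", "key": 2, "row": {"name": "Lin", "plan": "pro"}},
    {"op": "update", "key": 1, "row": {"plan": "pro", "region": "EU"}},  # new column
    {"op": "delete", "key": 2},
]

replica, known_columns = {}, []
for change in events:
    apply_change(replica, known_columns, change)
```

Treating inserts and updates as upserts keeps replay idempotent for those operations, which matters when a CDC stream redelivers events after a failure.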

Implementing Data Transformation and Feature Engineering at Scale

Once data flows into your system, transformation and feature engineering become the crucial value-creation steps for AI model training. Raw enterprise data rarely arrives in ML-ready formats. Customer records might be scattered across CRM, support, and billing systems. Product data exists in inventory, catalogs, and user interaction logs. Feature engineering consolidates these fragments into coherent feature vectors that AI models can consume.

The challenge intensifies at enterprise scale. Feature engineering for a single model might require joining tables with billions of rows, computing rolling aggregates over months of historical data, and applying complex business logic. These operations must complete within acceptable timeframes—ideally enabling data scientists to iterate rapidly rather than waiting days for feature pipelines to execute.

Modern approaches leverage distributed computing frameworks like Apache Spark or cloud-native services to parallelize feature computation. However, the real efficiency gain comes from feature stores—centralized repositories of pre-computed features that multiple models can share. Instead of each team recomputing "customer lifetime value" or "product affinity scores," the feature store calculates them once and serves them to all consumers. This dramatically reduces computational waste while ensuring consistency across models.
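The "compute once, serve many" behavior of a feature store can be illustrated with a small in-memory class. This is a sketch, not a real feature store API: `FeatureStore`, its methods, and the sample `ORDERS` data are hypothetical names introduced here.

```python
class FeatureStore:
    """Toy feature store: each (feature, entity) value is computed at most once."""

    def __init__(self):
        self._definitions = {}  # feature name -> function(entity_id) -> value
        self._values = {}       # (feature name, entity id) -> cached value
        self.compute_calls = 0  # how many times a feature function actually ran

    def register(self, name, compute_fn):
        self._definitions[name] = compute_fn

    def get(self, name, entity_id):
        key = (name, entity_id)
        if key not in self._values:
            self._values[key] = self._definitions[name](entity_id)
            self.compute_calls += 1
        return self._values[key]

    def get_vector(self, names, entity_id):
        """Assemble a feature vector for one entity, in the order requested."""
        return [self.get(name, entity_id) for name in names]


# Hypothetical order history per customer.
ORDERS = {"c1": [20.0, 30.0], "c2": [5.0]}

store = FeatureStore()
store.register("lifetime_value", lambda cid: sum(ORDERS[cid]))
store.register("order_count", lambda cid: len(ORDERS[cid]))

# Two different models request overlapping features for the same customer.
churn_features = store.get_vector(["lifetime_value", "order_count"], "c1")
upsell_features = store.get_vector(["lifetime_value"], "c1")
```

The second request reuses the cached `lifetime_value`, so both models see the same number and the computation runs only once. Production feature stores add time-awareness and invalidation, which this sketch leaves out.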

True 10x efficiency, as experienced practitioners note, "comes from leveraging AI across your entire organization's workflow, not just in isolated use cases." In the context of data pipelines, this means using AI to optimize the pipelines themselves. Automated feature selection, anomaly detection in data quality, and ML-driven pipeline optimization create compound efficiency gains. The same AI capabilities that power your product can accelerate the infrastructure that trains those models.

Orchestrating ML Training Workflows with Production Data Pipelines

ML deployment success hinges on seamlessly integrating training workflows with production data pipelines. The gap between data engineering and data science teams often creates friction—data scientists work with sanitized datasets in notebooks while production systems handle messy real-world data. Bridging this divide requires orchestration platforms that manage both data pipeline execution and model training as unified workflows.

Container-based training environments ensure reproducibility. When a model trains, the orchestration system should capture not just the model artifacts but the complete environment: data versions, code commits, dependency specifications, and hyperparameters. This enables reproducing any historical model exactly, which is essential for debugging production issues and for meeting compliance requirements in regulated industries.
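One lightweight way to capture that environment is a run manifest whose identifier is derived from its contents. The sketch below assumes hypothetical field names and example values; it is not tied to any particular orchestration tool.

```python
import hashlib
import json


def fingerprint(obj):
    """Deterministic SHA-256 hex digest of a JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def build_manifest(data_version, code_commit, dependencies, hyperparameters):
    """Record everything needed to reproduce a training run."""
    manifest = {
        "data_version": data_version,
        "code_commit": code_commit,
        "dependencies": dependencies,
        "hyperparameters": hyperparameters,
    }
    # The run id is derived from the inputs, so identical inputs share an id.
    manifest["run_id"] = fingerprint(manifest)[:12]
    return manifest


# All values below are placeholders for illustration.
run_a = build_manifest(
    data_version="snapshot-2024-03-01",
    code_commit="abc1234",
    dependencies={"example-lib": "1.0.0"},
    hyperparameters={"learning_rate": 0.01, "epochs": 5},
)
run_b = build_manifest(
    data_version="snapshot-2024-03-01",
    code_commit="abc1234",
    dependencies={"example-lib": "1.0.0"},
    hyperparameters={"epochs": 5, "learning_rate": 0.01},  # same values, new order
)
run_c = build_manifest(
    data_version="snapshot-2024-03-01",
    code_commit="abc1234",
    dependencies={"example-lib": "1.0.0"},
    hyperparameters={"learning_rate": 0.02, "epochs": 5},
)
```

Serializing with sorted keys makes the identifier independent of dictionary ordering, so `run_a` and `run_b` share an id while the changed learning rate in `run_c` produces a different one.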

Automated retraining pipelines monitor model performance and trigger retraining when accuracy degrades. Your data pipelines must support versioning so models can train on data snapshots that match specific time periods. This temporal consistency helps prevent training-serving skew, the notorious problem where models perform well in development but fail in production because the data seen in training differs from the data seen at serving time.
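A minimal sketch of both pieces follows: a retraining trigger based on recent accuracy, and a lookup that selects the data snapshot valid as of a given date. The threshold values, window size, and snapshot identifiers are assumptions chosen for illustration.

```python
from datetime import date


def should_retrain(recent_accuracy, baseline, tolerance=0.05, window=3):
    """Return True when the mean of the last `window` accuracy readings
    falls more than `tolerance` below the baseline accuracy."""
    if len(recent_accuracy) < window:
        return False  # not enough evidence yet
    recent = recent_accuracy[-window:]
    return sum(recent) / window < baseline - tolerance


def snapshot_as_of(snapshots, cutoff):
    """Return the id of the latest snapshot dated on or before `cutoff`.

    `snapshots` is a list of (date, snapshot_id) pairs.
    """
    eligible = [(day, snap_id) for day, snap_id in snapshots if day <= cutoff]
    if not eligible:
        raise LookupError(f"no snapshot on or before {cutoff}")
    return max(eligible)[1]


# Hypothetical snapshot catalog.
SNAPSHOTS = [
    (date(2024, 1, 1), "v1"),
    (date(2024, 2, 1), "v2"),
    (date(2024, 3, 1), "v3"),
]

healthy = should_retrain([0.91, 0.90, 0.92], baseline=0.92)
degraded = should_retrain([0.90, 0.85, 0.84, 0.83], baseline=0.92)
training_snapshot = snapshot_as_of(SNAPSHOTS, date(2024, 2, 15))
```

Averaging over a window avoids retraining on a single noisy reading. Selecting the snapshot by cutoff date keeps a model from training on data that did not yet exist at the time it is meant to represent.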

For enterprise organizations, orchestration complexity multiplies across teams and use cases. As practitioners have noted, "enterprise clients often need sophisticated multi-team functionality rather than simple single-user solutions. The ability to manage separate campaigns while maintaining unified oversight is a key differentiator for enterprise-grade software." The same principle applies to data pipelines: different teams need isolated development environments, but central governance and shared infrastructure prevent redundancy.

Ensuring Quality, Monitoring, and Continuous Optimization

Production-ready data pipelines for AI model training require comprehensive monitoring and quality assurance that goes far beyond traditional data pipeline metrics. You need visibility into data freshness, completeness, statistical distributions, schema evolution, and downstream model performance—all correlated to identify root causes when issues arise.

Data quality monitoring must be proactive, not reactive. Automated tests validate that incoming data matches expected schemas, falls within acceptable ranges, and maintains expected relationships. For example, if your customer churn model expects feature values within certain distributions, pipeline monitors should alert when incoming data drifts outside those bounds—before models train on corrupted data.
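The checks described above might look like the following sketch, which validates schema and ranges per row and flags a shift in a feature's mean. The schema, the ranges, and the simple z-score drift test are assumptions for illustration; production systems often use richer statistical tests.

```python
import statistics

# Hypothetical expectations for a churn model's input rows.
EXPECTED_SCHEMA = {"customer_id": str, "tenure_months": int, "monthly_spend": float}
RANGES = {"tenure_months": (0, 600), "monthly_spend": (0.0, 10_000.0)}


def validate_row(row):
    """Return a list of human-readable problems found in one row."""
    errors = []
    for field, expected_type in EXPECTED_SCHEMA.items():
        if field not in row:
            errors.append(f"missing field: {field}")
        elif not isinstance(row[field], expected_type):
            errors.append(f"wrong type for {field}")
        elif field in RANGES:
            low, high = RANGES[field]
            if not low <= row[field] <= high:
                errors.append(f"{field} out of range")
    return errors


def mean_drifted(reference, incoming, max_z=3.0):
    """Flag drift when the incoming batch mean is more than `max_z`
    standard errors away from the reference mean."""
    ref_mean = statistics.mean(reference)
    standard_error = statistics.stdev(reference) / len(incoming) ** 0.5
    difference = abs(statistics.mean(incoming) - ref_mean)
    if standard_error == 0:
        return difference > 0
    return difference / standard_error > max_z


good_row = {"customer_id": "c1", "tenure_months": 12, "monthly_spend": 49.0}
bad_row = {"customer_id": "c2", "tenure_months": -3, "monthly_spend": "49"}

reference_spend = [50.0, 52.0, 48.0, 51.0, 49.0]
stable_batch = [50.5, 49.5, 51.0, 49.0]
shifted_batch = [80.0, 82.0, 79.0, 81.0]
```

Running these checks before a training job starts is what makes the monitoring proactive: a batch that fails validation or shows drift can be held back instead of silently entering the training set.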

Lineage tracking creates transparency from raw data sources through transformations to final model inputs. When a model misbehaves, data scientists need to trace back through the pipeline to identify whether issues stem from source data problems, transformation bugs, or modeling choices. Without automated lineage, this debugging process can consume weeks.
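Lineage can be modeled as a graph in which each dataset records the inputs and transformation that produced it. The sketch below is a toy version with hypothetical dataset and transformation names; it shows how tracing back from a model input to its raw sources becomes a graph walk.

```python
class LineageGraph:
    """Toy lineage tracker: records which inputs produced each dataset."""

    def __init__(self):
        # dataset name -> list of (parent dataset, transformation name)
        self._parents = {}

    def record(self, output, inputs, transformation):
        self._parents.setdefault(output, [])
        for parent in inputs:
            self._parents[output].append((parent, transformation))
            self._parents.setdefault(parent, [])

    def upstream(self, dataset):
        """Return every dataset that `dataset` depends on, directly or not."""
        seen, stack = set(), [dataset]
        while stack:
            node = stack.pop()
            for parent, _ in self._parents.get(node, []):
                if parent not in seen:
                    seen.add(parent)
                    stack.append(parent)
        return seen

    def sources(self, dataset):
        """Return the raw sources: upstream datasets with no recorded parents."""
        return {name for name in self.upstream(dataset) if not self._parents[name]}


lineage = LineageGraph()
lineage.record("silver.orders", ["bronze.orders"], "deduplicate")
lineage.record("silver.customers", ["bronze.crm"], "standardize")
lineage.record(
    "gold.churn_features", ["silver.orders", "silver.customers"], "join_and_aggregate"
)

all_upstream = lineage.upstream("gold.churn_features")
raw_sources = lineage.sources("gold.churn_features")
```

With the graph in place, a question like "which raw feeds could have corrupted this feature table?" is answered by a lookup instead of a manual search through pipeline code.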

The compound efficiency gains extend to optimization cycles. At platforms like Influence Craft, AI "doesn't just help with one aspect of business - it creates compound efficiency gains across multiple domains. Not only does AI help 10x building code, building unit tests, and ensuring quality comes first, but it also 10xs the efficiency of creating content and marketing campaigns." Applied to data pipelines, continuous optimization through AI-driven cost monitoring, automatic resource scaling, and intelligent caching ensures that infrastructure costs don't spiral as data volumes grow.

Accelerating Time-to-Value with Enterprise-Grade Solutions

Building enterprise data pipelines for AI model training and ML deployment from scratch requires significant engineering investment. Organizations seeking faster ROI increasingly adopt platforms that provide production-ready infrastructure out of the box. The objective is ensuring all software developed meets enterprise standards while accelerating deployment timelines.

James - Dev Team focuses on this exact challenge: making sure all software developed is up to standard and production ready. This approach recognizes that true enterprise-grade solutions require more than functional code—they need comprehensive testing, quality assurance, seamless integration capabilities, and proven deployment patterns that deliver ROI within 90 days.

The modern approach emphasizes end-to-end solutions rather than point products. Organizations that once assembled dozens of tools now seek integrated platforms where data ingestion, transformation, feature engineering, model training, and deployment work together seamlessly. This integration eliminates the technical debt and maintenance burden that accumulates when stitching together disparate systems.

For companies serious about AI transformation, the question isn't whether to optimize data pipelines—it's whether to build or adopt proven solutions. Building custom infrastructure makes sense when your requirements are truly unique, but most organizations benefit from leveraging enterprise-grade platforms that embody best practices, enabling teams to focus on model development and business value rather than infrastructure maintenance.

Conclusion: From Data Chaos to AI Excellence

Enterprise data pipeline optimization for AI model training and ML deployment represents the foundation of successful AI transformation. Organizations that invest in scalable ingestion, automated feature engineering, orchestrated training workflows, and comprehensive monitoring position themselves to extract maximum value from AI investments. The key is recognizing that isolated improvements deliver minimal impact—true 10x efficiency comes from comprehensive workflow transformation.

Whether you're building from scratch or adopting enterprise-grade solutions, prioritize production readiness, seamless integration, and measurable business outcomes. The organizations winning with AI aren't necessarily those with the most sophisticated models—they're the ones with the infrastructure to train, deploy, and continuously improve models at scale. Start with your data pipelines, ensure they meet enterprise standards, and the path to AI excellence becomes clear.

Ready to transform your enterprise data infrastructure for AI success? Ensure your development meets production standards from day one with solutions designed for enterprise-grade AI deployment.
